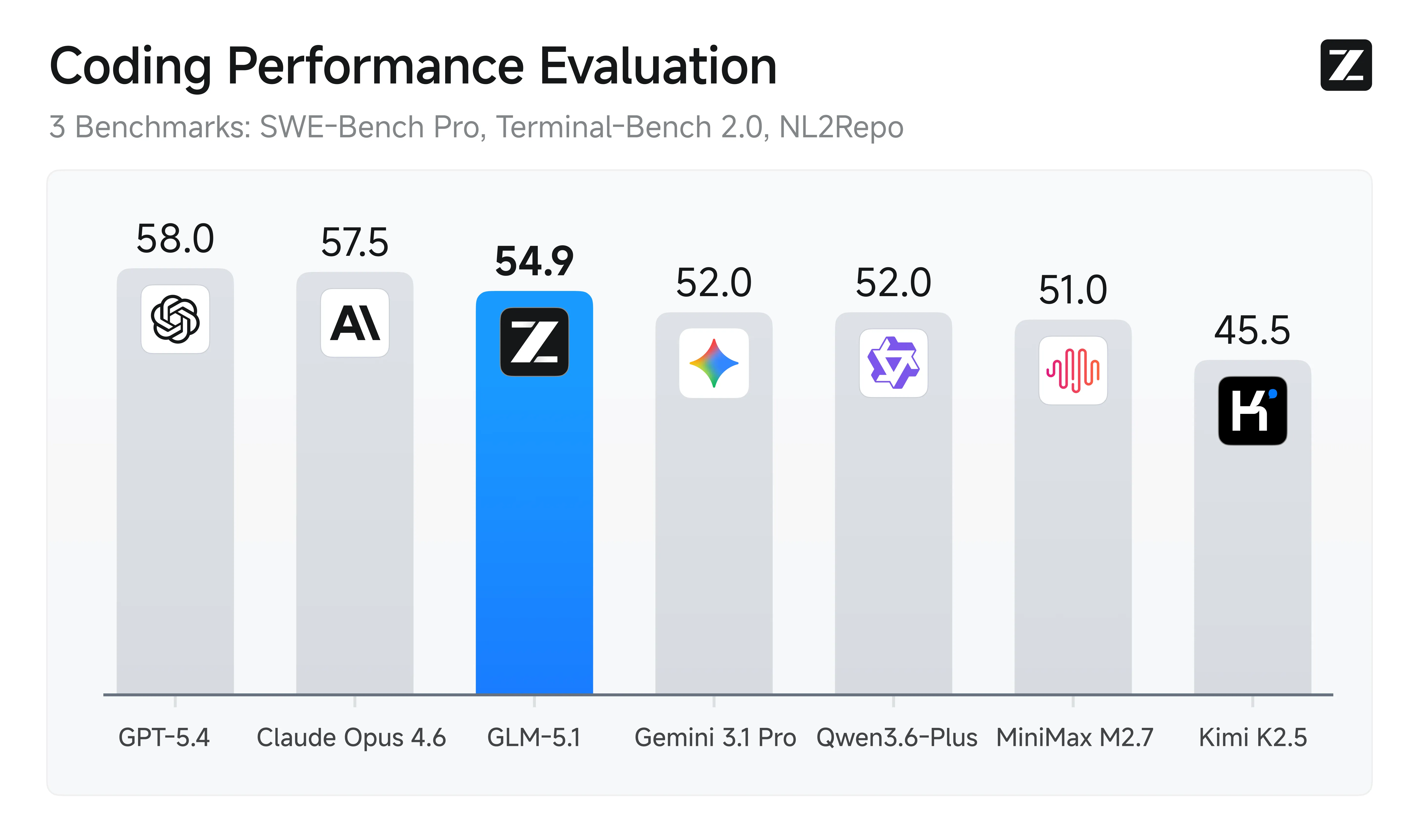

Chinese artificial intelligence is making headlines. In just a few months, models like Zhipu AI's GLM, Alibaba's Qwen, and DeepSeek have climbed international rankings, now rivaling American giants OpenAI and Anthropic. Four Chinese models even rank among the top ten on SWE-bench, a benchmark specialized in software engineering.

Yet despite these impressive results, widespread adoption in the West remains slow. Let's break down the barriers and the opportunities.

Structural Mistrust Around Data Protection

The first obstacle is both cultural and regulatory. In Europe, the GDPR imposes a strict framework for processing personal data, and any company handling data from European residents must comply, even if it is based outside the EU. China's legal framework, by contrast, is still perceived by Western businesses as less protective, even though it has been evolving since the 2021 Personal Information Protection Law (PIPL).

France, for instance, has requested explanations from DeepSeek regarding the compliance of its data processing with European law. This legal uncertainty naturally hinders enterprise deployments, where data sovereignty is a strategic priority.

Excellent Raw Performance, but Integration Still Needs Work

On paper, Chinese models shine: GLM-4.7 achieves 73.8% on SWE-bench Verified, ranking first among open-source models. Kimi K2 scores 97.4% on MATH-500 and 65.8% on SWE-bench. But raw performance isn't enough.

Seamless integration into Western development tools (VS Code, JetBrains, CI/CD platforms) remains a challenge. While GLM claims compatibility with over 20 tools like Cursor or Cline, field reports indicate these connections can be unstable, with API errors or message format incompatibilities. For a busy developer, reliability trumps benchmark scores.

Early User Feedback: Bugs, Latency, Hallucinations

Western early adopters, often independent developers or small teams, report mixed experiences. On specialized forums, some mention recurring bugs, variable latency depending on time zones, and persistent hallucinations in generated responses.

Although GLM-5 reportedly achieves a record-low hallucination rate according to its publisher, real-world production performance may differ, especially when the model spontaneously switches to Chinese in the middle of an English conversation. These technical frictions are minor individually, but they add up and discourage broader adoption.

A Price-to-Performance Ratio Hard to Ignore

This is the most tangible argument: Chinese models are often cheaper. Kimi offers a max subscription at $159/month, and on Z.ai's subscription page, the GLM Coding Max plan is priced at $160/month, whereas an equivalent Claude subscription (Max 20x) costs $200/month. For a startup or freelancer, this saving can be significant. When performance is comparable on certain tasks, the economic choice becomes rational, especially when R&D budgets are tight.
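To put the gap in concrete terms, here is a minimal Python sketch of the arithmetic, using only the three monthly prices quoted above. The function name `savings_vs` and the 12-month projection are illustrative assumptions: the yearly figure simply multiplies the monthly list price by twelve and ignores annual discounts, taxes, and any differences in usage limits between plans.

```python
# Monthly list prices in USD, as quoted in this article.
PLANS = {
    "Kimi (max subscription)": 159,
    "GLM Coding Max": 160,
    "Claude Max 20x": 200,
}


def savings_vs(baseline: str, plans: dict[str, int]) -> dict[str, tuple[int, float, int]]:
    """Return (monthly saving, saving in percent, yearly saving) per plan.

    Hypothetical helper for illustration. The yearly saving assumes
    12 months at the monthly list price, with no discounts or taxes.
    """
    base = plans[baseline]
    result = {}
    for name, price in plans.items():
        if name == baseline:
            continue
        monthly = base - price
        percent = round(100 * monthly / base, 1)
        result[name] = (monthly, percent, 12 * monthly)
    return result


savings = savings_vs("Claude Max 20x", PLANS)
for name, (monthly, percent, yearly) in savings.items():
    print(f"{name}: ${monthly}/month less ({percent}%), about ${yearly}/year")
```

On these figures, either Chinese plan comes in roughly 20% below the Claude plan, or a little under $500 a year per seat. Whether that is a real saving depends on the plans actually being equivalent for the workload in question, which the list prices alone cannot show.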

An Innovation Race That Benefits Everyone

The rise of Chinese players is stimulating global competition. When DeepSeek released a high-performing, low-cost open-source model in January 2025, it forced American labs to accelerate their own iterations.

This dynamic ultimately benefits the entire market: lower prices, improved features, and diversification of technical approaches. Western developers can now draw from a richer ecosystem, mixing models according to their specific needs.

China's Strategy: Arrive Late, Then Dominate

China has a remarkable track record of technological catch-up: it entered e-commerce, mobile payments, and electric vehicles after early movers, only to eventually dominate these markets. AI is likely following the same pattern. With its "AI+" plan targeting 70% AI integration across the economy by 2027 and 90% by 2030, Beijing is making artificial intelligence an absolute national priority.

China's 15th Five-Year Plan (2026–2030) mentions AI over 50 times, compared to just 11 in the previous plan. This state-level mobilization, combined with a vast pool of engineers and data, creates the conditions for a sustainable breakthrough.

Conclusion: Gradual but Inevitable Adoption?

Chinese AI models aren't yet replacing GPT-5 or Claude Opus in Western enterprises, but they are establishing themselves as credible alternatives, particularly for technical use cases, coding tasks, or budget-constrained projects. Concerns about data, integration friction, and mixed feedback from pioneers are slowing adoption, but not stopping it.

As APIs stabilize, compliance certifications advance, and pricing remains aggressive, Chinese models are likely to gain market share, first at the periphery, then at the core of professional workflows. After all, competition drives innovation, and everyone benefits.